Synth-Aesthesia: CeReNeM’s Future Sounds

In a large post-industrial town in West Yorkshire, researchers at the Centre for Research in New Music are pushing the boundaries of recorded sound

“What we specialise in here in Huddersfield is composers using technology to make things that are not possible otherwise,” says Pierre Alexandre Tremblay, director of the electronic music studios at the University of Huddersfield and a professor of composition and improvisation within its Centre for Research in New Music (CeReNeM).

A large post-industrial town in West Yorkshire, Huddersfield is known internationally in the world of modern composition for the annual Huddersfield Contemporary Music Festival (hcmf//), which was founded in 1978 by one of the university’s music lecturers, and where the music of 20th-century cultural iconoclasts such as Cage and Stockhausen brushes spikily up against that by newer experimentalists.

At CeReNeM, however, the “new music” in question isn’t confined to contemporary composition – although there’s plenty around, with centre director Liza Lim and staff such as Bryn Harrison and Philip Thomas all combining their academic roles with acclaimed careers writing and performing. It also encompasses musicians such as Tremblay, whose background in punk and rap production informs a fascination with gesture, virtuosic precision and improvisation; Monty Adkins, whose meditative electronica finds a home on Sheffield ambient label Audiobulb; and postgraduate researcher Ryoko Akama, creator of minimal and playfully conceptual sound art and sometime collaborator with synth pioneer Eliane Radigue.

Crucially, the atmosphere of cross-pollination in which graphic scores and laptop-built Max patches spring up alongside circuit-bending, improvisation and audiovisual installations is supported by facilities that allow staff and students alike to push sound as far as their imaginations can travel.

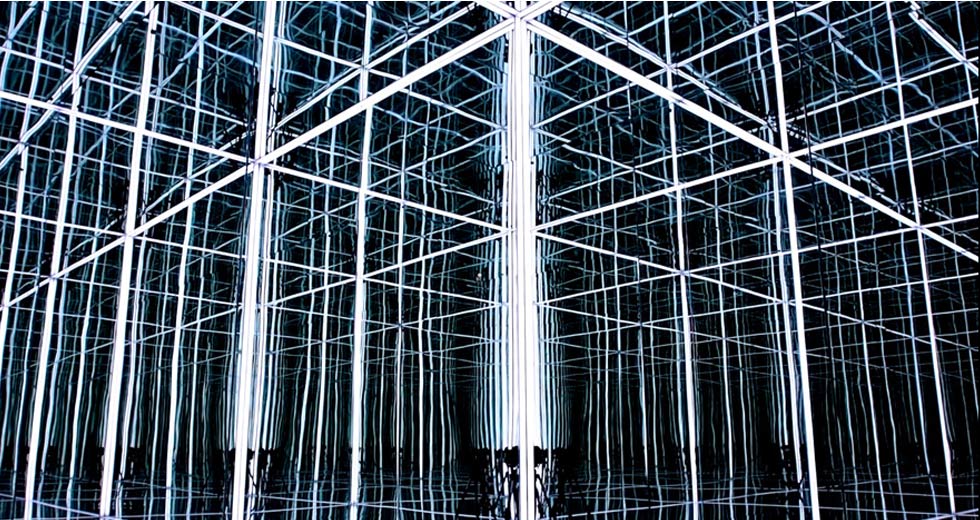

A key tool at CeReNeM is HISS (Huddersfield Immersive Sound System), the loudspeaker orchestra that Tremblay describes as “a super-surround experiment” offering 48-channel sound, top-end microphones and radiating, multi-level loudspeakers, mixed through a desk offering the flexibility of Max/MSP’s modular system as well as video capabilities.

The head-spinning diffusion powers of HISS are showcased annually at the Electric Spring festival of electronic music organised by Tremblay and Adkins, at hcmf//, and in other concerts at the university. For Tremblay, however, concentrating too hard on the equipment available misses the point. “The HISS is a lab, but it’s also a community of people who surround it,” he says. “The HISS approach to technology is that fundamentally it has to become irrelevant. It’s a bit like an aeroplane: you don’t want to think about whether someone forgot a bolt somewhere. You want it to be sturdy, solid and transparent so that you can focus upon your travel.” Such sonic precision can cast an unforgiving spotlight upon the work of emerging composers. “There’s a lot of gear addiction [in electronic music]; musicians will moan that they don’t have access to resources. Here, no one can use the gear myth. They have access to the mythical stuff, so if it sucks, it’s their fault.”

Beyond its role as a 3D sonic sandbox for staff and students, what does a facility like HISS, or similar systems such as Birmingham’s BEAST or the Sonic Lab at SARC, Belfast, have to offer the wider world of music? One concrete example is the HISSTools Impulse Response Toolbox (HIRT), a set of freely downloadable external objects for Max/MSP created jointly by Tremblay and his CeReNeM colleague Alex Harker that allows users to program sophisticated loudspeaker room correction in concert settings.

The precursor to HIRT was Thinking Inside the Box, Tremblay’s project to help electronic composers avoid nasty acoustic surprises by imbuing sonically near-perfect studios with an accurate image of the less controlled space awaiting in the concert hall. “The technology is used to take an imprint of reality, which is very far from ideal, and bring that into a studio,” he says.

HIRT performs the inverse role, allowing composers to fine-tune the performance of multichannel works. “Of course, you can do this manually, if one frequency in the concert hall is too nasal or if it’s a bit top heavy or bass shy. But the tools we’ve developed allow you to do it much more precisely, for the full spectrum.”
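
For readers curious what correcting "the full spectrum" can mean in practice, here is a minimal sketch of one textbook approach, regularised spectral inversion. It is not HIRT's actual implementation: the function names, the toy impulse response and the regularisation value are all assumptions made for illustration.

```python
import cmath

def dft(samples):
    """Plain discrete Fourier transform (slow, but dependency-free)."""
    n = len(samples)
    return [
        sum(samples[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]

def correction_spectrum(room_ir, regularisation=1e-3):
    """Approximate inverse of the room's frequency response.

    The small regularisation term (an assumed value) stops the filter
    from boosting without limit where the room response is near zero.
    """
    return [h.conjugate() / (abs(h) ** 2 + regularisation) for h in dft(room_ir)]

# Hypothetical measurement: direct sound plus one strong early reflection.
room_ir = [1.0, 0.6, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0]

room = dft(room_ir)
correction = correction_spectrum(room_ir)

# Gain of room and correction filter combined, per frequency bin.
corrected_gain = [abs(h * c) for h, c in zip(room, correction)]
```

In this toy case the uncorrected room boosts some bins and cuts others, while every bin of `corrected_gain` sits close to 1: the whole spectrum is flattened at once rather than one troublesome frequency at a time.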

Both Tremblay and Harker stress that much of HIRT was built upon science that already existed, but which was inaccessible to musicians lacking specialised programming skills. “A lot of what I do builds on things that are pre-existing in some form, but providing easier access to them,” Alex Harker says. Creating HIRT took him 18 months of full-time coding as Tremblay’s postdoctoral researcher, but beyond the mass of formulae and algorithms that he grappled with, its innovation also stems from his idea of using Max/MSP’s modularity to break down the impulse response tasks into their component stages, sidestepping the restrictions placed upon users by boxed-in commercial reverbs.

“By creating a toolbox of all these objects, he opened a beautiful can of worms, where you can optimise something or voluntarily mess it up or remove a step,” Tremblay enthuses. “It started to get the creative brain going.” One such worm has wriggled its way into laptops the world over in the form of a convolution reverb included with Ableton Live 9. Built by Harker using the HISSTools, it allows users to digitally simulate the impulse response of their performance space – its unique reverb character – or treat their sounds with IRs from a menu of more than 200 concert halls, effects and pieces of vintage gear.
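
The principle behind any convolution reverb can be sketched in a few lines. This is a simplified illustration rather than the code shipped with Live or the HISSTools: the function name and the toy signals are assumptions, and real-time reverbs use much faster FFT-based methods.

```python
def convolve(dry, impulse_response):
    """Direct convolution: each input sample triggers a scaled copy of the IR."""
    wet = [0.0] * (len(dry) + len(impulse_response) - 1)
    for n, sample in enumerate(dry):
        for k, tap in enumerate(impulse_response):
            wet[n + k] += sample * tap
    return wet

# Hypothetical toy data: two clicks, and a three-tap "room" that decays by half each step.
dry = [1.0, 0.0, 0.0, 0.5]
room_ir = [1.0, 0.5, 0.25]

wet = convolve(dry, room_ir)
```

Each click in `dry` comes out followed by its own decaying tail, which is what it means to stamp a space's reverb character onto a sound.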

For Harker, who started out on the more conventional music degree route of violin and paper-based composition before honing his skills in Max and the C language on his master’s degree, programming is the key to giving form to the musical shapes in his head. One example is his 2011 piece “Fluence,” in which a computer duets with a lyrical clarinet part, drawing upon a bank of around 1600 samples in real time and using digitally determined criteria to create a tapestry of percussion and ambient sounds that reweaves itself with every performance.

“I feel there’s a newer generation of practitioners who have grown up with musical training and an interest in programming,” he reflects. “It’s not the model where you have a musician and a programmer come together and try to make a creative project. In a big institution, there are a lot of people who are self-reliant.”

In a way, musicians like Harker are the children of the ’70s generation of electronics enthusiasts, who built their own synthesisers by painstakingly soldering together each component. One such circuitry whizz turned composer is CeReNeM’s electroacoustic studio manager Dr Mark Bokowiec, who was there when the university assembled its first studio in 1979.

These days Bokowiec is the proud caretaker of SPIRAL – Spatialisation and Interactive Research Lab – the 25-channel studio that offers a 3D canvas for staff and students to paint with sound. As well as facilitating the sonic abstraction of electroacoustic composition, SPIRAL is also vital as a testing ground for research into using controllers in live performance.

On the one hand, the mass availability of motion-sensing gaming systems means that it’s never been easier to convert gestures into spatialised music, and many of Bokowiec’s students develop projects using Wii remotes or other controllers. Yet CeReNeM also offers the kind of environment where much more complex approaches such as his own Bodycoder system can flourish. Created in conjunction with his wife, Julie Wilson-Bokowiec, the Bodycoder allows performers to control music with their physical movements – or, as Bokowiec puts it, “on-the-body diffusion.”

Having evolved from its 1990s incarnation as a set of sensors embedded into a latex suit that Wilson-Bokowiec would wear to dance, the most recent version of Bodycoder sees her controlling her own voice in real time, using signals from on-off switches and proportional sensors (connected to joints such as elbows), wirelessly transmitted to a receiver and processed using Max/MSP. A finger movement is sufficient to sample a stretch of singing; a second will loop it and send it for diffusion through a multichannel speaker array.

Another Huddersfield musician to recognise the potential offered by control technology is cellist Seth Woods, whose PhD research at CeReNeM explores the shared physicality of instrumental performance and dance. During a stint as researcher in residence in the CIRMMT Institute at Montreal’s McGill University, he linked up with Ian Hattwick and Joseph Malloch, creators of a prosthetic spine that now forms the focus of his current work.

Fashioned from acrylic and with more than a touch of HR Giger about it, the spine piggybacks on the wearer with the help of a headband and flexible corset, as sensors inside record Woods’ cello-playing movements. “It’s based upon bend and twist parameters, which in some ways is simple, but mapping and getting the computer to acknowledge minute gestural movements as well as the larger ones is always difficult when you’re dealing with many different areas of gesture,” he explains.

Woods’ recent piece Almost Human demonstrates the reward for all that calibration and adjustment. Using the spine to capture his movements while playing cello works ranging from Bach to Ferneyhough, he analysed them using a Qualisys motion capture system and Laban choreographic notation. Scaling up the gestures to full-body dance movements, he then fed them back into the spine.

“It’s almost like muscle memory,” he says. “You’re taking an instrument that’s brand new and has no performative or physical language, and trying to give it one. I took the background I have in dance and the one I have in instrumental performance and tried to forge those onto the instrument, to see what it really wants to do and what it can do.” The resulting work has two halves: in the first, the spine takes in data as Woods plays, which is used to stretch and granularise the strings’ sound. In the second, he lays his cello aside and dances the scaled-up gestures, further manipulating and augmenting the sampled music.

“Almost Human is half-cyborg, half-human, so in a way I’m questioning the idea of how and why we move. Would we move differently if we had a second skin? If we took our own spines and attached them on the outside, would we rotate or would we float through life very differently?” Working with Hattwick and Malloch’s spine has certainly given Woods a taste for the possibilities that technology offers to musicians using traditional instruments. “The next thing for me is to try to design and create an interface which I can use as one large unit to express myself electronically as well as acoustically.” For now, he is working with Bokowiec on a new piece incorporating both the spine and the Bodycoder in which he and Wilson-Bokowiec will control each other’s sounds.

Harker, meanwhile, has further additions to the HISSTools collection in mind, and is currently thinking through ways to improve frame or block processing, the “various kinds of processing where you cut the sound up into chunks or frames of some kind, such as spectral type processing, where in order to see the spectrum you have to have a slice of time.” His solution would ease the trade-offs that currently have to be made between the quality of frequency data and the quality of timing data.
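
The trade-off in question comes down to simple arithmetic, sketched below. This illustrates the general constraint on frame-based analysis, not Harker's proposed solution: the function name and the sample rate of 44,100 Hz are assumptions chosen for the example.

```python
def frame_resolution(frame_size, sample_rate=44100):
    """Return (frequency spacing in Hz, frame duration in ms) for one analysis frame.

    A longer frame separates frequencies more finely but smears events
    across a longer slice of time, and vice versa.
    """
    frequency_spacing_hz = sample_rate / frame_size
    frame_duration_ms = 1000.0 * frame_size / sample_rate
    return frequency_spacing_hz, frame_duration_ms

# Compare a short, a medium and a long frame (sizes chosen for illustration).
for size in (256, 1024, 4096):
    spacing_hz, duration_ms = frame_resolution(size)
    print(f"{size:>5} samples: {spacing_hz:7.2f} Hz per bin, {duration_ms:6.2f} ms per frame")
```

Whatever frame size is chosen, the two figures multiply to the same constant, so sharpening one necessarily blurs the other.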

He can easily remember a time when such projects would have been unthinkable. “When I was an A-level student, we used to get excited about computers that could record four or eight tracks at once,” he recalls. Considering technology’s progression during his academic lifetime, how has it changed the way that he thinks about music?

“I think there are several ways in which that has happened,” he says. “One limitation is simply processing, where you could dream what you want to do but the computer simply wasn’t fast enough to do it in real time. For a lot of people those boundaries are dissolving so it’s hard for them to dream enough stuff for the computer to do.”

For him, however, the biggest difference is his move from being a user of software to a programmer. “For a programmer, a computer is a tool, a means to allow you to do what you intend to do. And you can do some very esoteric things. It’s probable that no one is going to build a piece of software that works in the way that I think about music, except for me.”