Instrumental Instruments: Auto-Tune

Our series on important music-making devices continues with the infamous software, whose primary function is infinitely broader and much trickier to discern than its signature robotic effect

In the fall of 1995, Dr. Andy Hildebrand spent a month in bed. He wasn’t sick, though: he was thinking. Hildebrand intermittently emerged from under the blankets and walked down the hallway to his office. Through the windows, sunlight filtered through the ancient redwood forest of coastal California. He transferred the numerical codes in his head onto a computer, observed how they reacted and then returned to his bedroom to contemplate the response. Days passed, then weeks. Eventually, during one of these voyages down the hall, the web of numbers transformed into a functioning algorithm.

Hildebrand didn’t know it, but he had just redirected musical history. The path this bookish inventor had forged would soon be populated with real and wannabe pop stars, rap legends, dance world cyborgs, media critics, indie rockers, Cher, an R&B singer with a predilection for top hats and thousands of other artists who have kept low profiles as they used, and abused, the system that had emerged from Hildebrand’s mind during those quiet days in the redwoods.

The basis of this sonic revolution is, on paper, fundamentally mundane: Hildebrand’s equation relied on auto-correlation, a calculation that detects when an incoming signal repeats itself. Such repetition indicates pitched data. By measuring the distance between repeats of the waveform, the software could determine the pitch being sung and correct irregular notes, resulting in more uniform output.
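For readers who want to see the idea in miniature, here is a rough sketch of pitch detection by auto-correlation. It is not Hildebrand's algorithm, which the article does not spell out; the function name, the 80 to 1,000 Hz search range and the test tone are all assumptions made for illustration. The code slides the signal against a delayed copy of itself and picks the delay (lag) at which the two line up best. That lag approximates the period of the note.

```python
import math

def estimate_pitch_hz(samples, sample_rate, fmin=80.0, fmax=1000.0):
    """Estimate the fundamental frequency of a pitched signal.

    Illustrative only: compares the signal with delayed copies of itself
    and returns the frequency implied by the best-matching delay.
    fmin and fmax are assumed search limits, not values from the article.
    """
    min_lag = int(sample_rate / fmax)  # shortest period considered
    max_lag = int(sample_rate / fmin)  # longest period considered
    best_lag, best_score = None, 0.0
    for lag in range(min_lag, max_lag + 1):
        # Auto-correlation at this lag: large when the signal repeats
        # itself every `lag` samples.
        score = sum(samples[i] * samples[i + lag]
                    for i in range(len(samples) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag if best_lag else None

# A synthetic 200 Hz tone sampled at 8,000 Hz (hypothetical test signal).
SAMPLE_RATE = 8000
tone = [math.sin(2 * math.pi * 200.0 * n / SAMPLE_RATE) for n in range(1024)]
estimate = estimate_pitch_hz(tone, SAMPLE_RATE)
```

Because the lag is a whole number of samples, the estimate is only as fine as the sample rate allows. Real pitch trackers refine it further, and a real voice is far messier than a sine wave.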

Put simply, Hildebrand had invented Auto-Tune.

“Usually I lay low to the world,” he says on the phone from his house in Scotts Valley, California. “You don’t want to sound like an idiot [when someone says] they hate Auto-Tune. Like, ‘Yeah, I’m the guy who invented it.’”

While Auto-Tune’s signature robotic effect made it infamous, its primary function is infinitely broader and much trickier to discern. Critics argued that Auto-Tune’s ability to instantly perfect vocal and solo instrumental performances sucked the soul out of music. The music industry answered with a definitive “whatever,” and continued its sweeping applications of the program. Twenty years after its release, the pitch-correction device is indispensable in studios and live shows. This ubiquity is obscured by the fact that most vocalists who use Auto-Tune will never admit it, preferring listeners believe their impeccable performance was a result of natural talent rather than digital manipulation.

“I definitely think Auto-Tune is ubiquitous,” says Laura Escudè, who has done programming, arrangements, vocal effects and live show design for artists including Kanye West, Bon Iver and the Weeknd. “I think almost every big pop artist used it at one time or another. There are people out there that definitely don’t need it, but use it anyway as a safety net.”

Purists remain offended that people with access to the software are able to manufacture singing ability, but technological perfection of the human voice was an inevitable aspect of the digitization of music beginning in the 1970s. Hildebrand simply patented the technology first.

Hildebrand earned a Ph.D. in electrical engineering from the University of Illinois in 1976. He entered the oil industry after graduating, working at Exxon for three years before co-founding his own company, Landmark Graphics. This business dealt in seismic data exploration, using underground explosions and audio signal processing to map the Earth’s subsurface and locate oil deposits. Hildebrand casually notes that this system “changed the oil industry” by making it vastly easier to find and extract fossil fuels, and he’s not joking. In 1996, Landmark Graphics was purchased by Halliburton, the multinational oil corporation then run by future Vice President Dick Cheney. The deal was valued at $525 million.

Then in his early 40s and suddenly rich, Hildebrand retired. A childhood flute prodigy and one-time session musician, he had always been interested in music and went back to school to study musical composition at Houston’s Rice University. Here, he found crossover between his previous career and new intellectual endeavors.

“There’s a lot of standard techniques [in signal processing],” Hildebrand says. “I applied some of those techniques in oil exploration and applied some very similar and related techniques in music processing, including Auto-Tune.” The key to both seismic data exploration and Auto-Tune is the auto-correlation, the aforementioned equation that indicates when a signal repeats itself, thus identifying pitched data.

Hildebrand left Rice after a year to focus on the technology he had dreamed up in bed, and his invention debuted at the 1997 National Association of Music Merchants (NAMM) conference. While he wasn’t convinced Auto-Tune would be a hit, the conference hinted at the massive success that would follow, with people in the crowd literally grabbing copies out of his hands. With the push of a small marketing team, the first incarnation of Auto-Tune was released by Antares, a company Hildebrand had co-founded years earlier. Within a few months, every major studio in the United States and beyond had purchased a copy. Pandora’s voice box was open.

One of Auto-Tune’s sexiest features was speed. Previously, the only way to get a perfect vocal take was to have a singer perform over and over to ensure every note, breath and sense of emotional urgency had come out well at least once. The producer would then begin a tedious process called comping. Still used today, this method allows the producer to cut and paste the best moments of an audio session into one take, creating the illusion of a single flawless performance. With Auto-Tune, the depth of this illusion was intensified as wonky vocals were fixed in real time. Producers could use the software to manually correct any remaining imperfections, reducing studio sessions from days to hours.

“When you think about the economics of the sound studio,” Hildebrand says, “Once two or three studios had it, they all had to have it.”

A year after its debut, Auto-Tune was made infamous by a function it was never intended to perform. In the late ’90s, Warner Bros. decided that to make up for the disappointing sales of her previous LP, Cher would focus on her gay audience with a dance-focused album. The pop icon released her 22nd studio LP, Believe, in 1998, and its title track featured a chorus in which the diva’s voice briefly shifted from that of a human woman to that of a vaguely female cyborg. The song’s co-producer, Mark Taylor, discovered the effect after the Auto-Tune program had arrived at his UK studio.

According to the New York Times, Taylor was initially nervous Cher would throw a tantrum about him messing with her vocals, but, he said, “a couple of beers later we decided to play it for her, and she just freaked out.” The label wanted Cher to drop this flourish. She refused. (She told the Times: “I said, ‘You can change that part of it over my dead body!’ And that was the end of the discussion.”) The bubblegum club anthem introduced the “Auto-Tune effect” to the world as it hit #1 in 17 countries. Sales surged as hobbyists picked up the technology for home use.

“I didn’t think anybody in their right mind would ever use that [effect],” Hildebrand says. He was profoundly wrong.

Auto-Tune works in two ways. The graphical mode allows producers to plot the singer’s pitch on a computer screen and manually change that pitch by drawing a new one in. (Melodyne, another pitch correction plug-in that hit the market in 2001, offers a similar function.) The automatic mode scans the incoming material, identifies when the pitch deviates from the pre-determined scale, finds the note the singer is closest to and moves the voice to that note, mechanically polishing a performance in a way that sounds natural.
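The automatic mode's core decision, finding the closest note in a chosen scale, can be sketched in a few lines. This is a simplified illustration rather than Antares' code: the C major scale, the A4 = 440 Hz tuning reference and every function name here are assumptions. Frequencies are converted to MIDI note numbers, where each whole number is one semitone, so "closest note" becomes a simple distance comparison.

```python
import math

A4_HZ = 440.0                        # assumed tuning reference
C_MAJOR = {0, 2, 4, 5, 7, 9, 11}     # pitch classes C D E F G A B

def hz_to_midi(freq_hz):
    """Convert a frequency to a (fractional) MIDI note number."""
    return 69 + 12 * math.log2(freq_hz / A4_HZ)

def midi_to_hz(note):
    """Convert a MIDI note number back to a frequency in Hz."""
    return A4_HZ * 2 ** ((note - 69) / 12)

def nearest_scale_note_hz(freq_hz, scale=C_MAJOR):
    """Return the frequency of the scale note closest to freq_hz."""
    sung = hz_to_midi(freq_hz)
    center = round(sung)
    # Look at every scale note within half an octave of the sung pitch.
    candidates = [n for n in range(center - 6, center + 7) if n % 12 in scale]
    target = min(candidates, key=lambda n: abs(n - sung))
    return midi_to_hz(target)

# A slightly sharp A4 is pulled back to 440 Hz; a pitch just above
# A#4, which is not in C major, is pushed up to the neighboring B4.
corrected_a = nearest_scale_note_hz(450.0)
corrected_b = nearest_scale_note_hz(470.0)
```

Choosing the target note is only half the job. The software still has to shift the audio to that pitch without mangling the voice, which is where the harder signal processing lives.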

The program’s infamous quirk lies in the plug-in’s retune speed, or how quickly Auto-Tune takes over to correct the pitch. When the speed is turned to zero, the software moves faster than it was built to, correcting the pitch at the very beginning of the note and locking the overall pitch to that note, stripping any variations and forcing instant transitions. The effect, technically called “pitch quantization,” is now so standard that Antares notes how to achieve it in each Auto-Tune product manual.
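A toy model makes the difference audible on paper. The sketch below is an assumption-laden illustration, not the plug-in's actual behavior: it treats retune speed as a number of analysis frames (a hypothetical unit) and lets the correction glide toward the target note a little on each frame. At zero, the glide disappears and every frame lands exactly on the note.

```python
def apply_retune(detected, targets, retune_frames):
    """Move detected pitches toward target notes at a given retune speed.

    detected and targets are lists of MIDI note numbers, one per frame.
    retune_frames is a hypothetical control: 0 snaps instantly, larger
    values let the correction catch up more gradually.
    """
    alpha = 1.0 / (1.0 + retune_frames)  # share of the gap closed per frame
    correction = 0.0                     # semitones of shift currently applied
    output = []
    for sung, target in zip(detected, targets):
        wanted = target - sung           # shift needed to land on the note
        correction += alpha * (wanted - correction)
        output.append(sung + correction)
    return output

# A singer wobbling around A4 (MIDI note 69), one value per frame.
sung = [68.6, 68.8, 69.3, 69.1]
target = [69.0] * len(sung)

robotic = apply_retune(sung, target, retune_frames=0)  # every frame lands on 69
natural = apply_retune(sung, target, retune_frames=4)  # some wobble survives
```

In the zero setting the singer's scoops and vibrato vanish completely, which is the flattened, stair-stepped sound described above. With a slower setting, part of the original movement remains.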

With it, the artist born Faheem Najm became the Auto-Tune effect’s greatest innovator and first public punching bag. As T-Pain, Najm made himself synonymous with synthetic vocals, beginning with 2005’s “I’m N Luv (Wit a Stripper),” the breakout single from his dubiously titled debut Rappa Ternt Senga. T-Pain (who has a legitimately excellent singing voice) dripped his odes to booze and booty in Auto-Tune, an arc that culminated with him playing a seafaring caricature of himself in a 2009 Saturday Night Live digital short.

T-Pain eventually sued Antares in 2011, claiming the company was using his name and likeness in ways he hadn’t agreed to. He had at the same time partnered with audio technology company iZotope to promote his own voice manipulation device, “The T-Pain Effect.” Packaging on another namesake product, the “I Am T-Pain Mic,” noted: “This product does not utilize the Auto-Tune technology owned by Antares Audio Technologies.” As part of the settlement, Hildebrand is not allowed to use the name “T-Pain” in public.

In the inherently forward-thinking electronic world, Daft Punk were early adopters, using the effect throughout 2001’s Discovery. In an interview from the same year, Thomas Bangalter noted that “A lot of people complain about musicians using Auto-Tune. It reminds me of the late ’70s when musicians in France tried to ban the synthesizer… People are often afraid of things that sound new.” In a 2001 profile in The Wire, Radiohead’s Thom Yorke discussed using Auto-Tune on the then just-released Amnesiac: “There’s also this trick you can do, which we did on both ‘Packt [Like Sardines]’ and ‘Pulk/Pull Revolving Doors,’ where you give the machine a key and then you just talk into it. It desperately tries to search for the music in your speech, and produces notes at random. If you’ve assigned it a key, you've got music.”

Producers of all genres began distorting their own vocal hooks. Snoop Dogg got on board with “Sensual Seduction,” Lil Wayne had “Lollipop” and Ke$ha got more famous than some believed she had the right to with her heavily Auto-Tuned 2010 breakout “Tik Tok.” It was around this time that everyone decided Auto-Tune was terrible.

The backlash to Auto-Tune’s obvious and invisible uses became a trend in and of itself. Christina Aguilera was photographed wearing an “Auto-Tune Is For Pussies” t-shirt (she later admitted to using it), while indie pop outfit Death Cab For Cutie wore blue pins to the 2009 Grammy Awards to protest what they called “Auto-Tune abuse.” The band’s bassist Nick Harmer forecast that the technology would destabilize artistry itself, because “musicians of tomorrow will never practice. They will never try to be good, because yeah, you can do it just on the computer.” The following year, Jay Z won the Best Rap Solo Performance Grammy for “D.O.A. (Death of Auto-Tune),” months before Time put Auto-Tune on its list of the 50 worst inventions. But if “real” artists were virtue signaling, studio pros were scrambling to distinguish themselves as the democratization of technology launched a million bedroom producers.

“I feel like Auto-Tune was the defining plug-in that separated me at a crucial time when separation was difficult to find in engineers,” says Atlanta-based engineer Seth Firkins, whose clients include Future, Drake, Kevin Gates and Rihanna. “Other engineers were quick to let the Auto-Tune go because there was such a backlash, but I didn’t. I felt like I knew how to really utilize it for an emotional response, and that served me so well that I had no reason to give it up.”

But like so many disruptive technologies, Auto-Tune soon normalized into just another way to experiment. Kanye West’s 2008 LP 808s and Heartbreak was soaked in the effect. (T-Pain was a consultant on the album.) Future made it his signature. (“I’ve never asked Future, ‘Do you want me to put Auto-Tune on your voice?’” says Firkins. “I just always do.”) It was easy to accuse Auto-Tune of stripping humanity from music, but with it, the possibility of deeper emotional connection was also revealing itself.

“There are certain artists that want to sing,” says Laura Escudè. “They want to express themselves in that way, but they don’t have the confidence because they’re not a trained singer. If [Auto-Tune] allows them to get their emotion out, I think that’s amazing.”

“It doesn’t feel like you’re cheating anymore,” Escudè continues. “It’s just another tool to be creative and make something sound more interesting. Take Bon Iver. He can sing, and he sounds amazing with no effects. Then you put Auto-Tune on him, and it opens up this whole other dimension.”

“The difference still is the talent,” Firkins echoes. “Even though the tool remains the same, your performance through that tool is still what has to be original. It does prove that not all the songs are built the same.”

A major advantage of Auto-Tune is that it can be used in live shows, delivering immaculate vocals in real time whether or not the performer is on top of their game. That said, when the live use of Auto-Tune backfires, it can do so spectacularly. Billy Joel found out the hard way when he performed “The Star-Spangled Banner” at the 2007 Super Bowl, and his use of the program was neither subtle nor aurally pleasing.

“I obviously think that performing live is the best way to go about things,” says Escudè. “But again, when you’re in a situation where the show must go on – artists are not indestructible. They get sick and tired. It’s physically exhausting to be on stage day in and day out. I don’t hate on [people using Auto-Tune] anymore.”

Last October, Hildebrand sold the company to a Los Angeles-based merchant bank. (Details of the deal were not disclosed.) Still based in Northern California, Antares employs a staff of nine and is now headed by CEO Steve Berkley, who calls Auto-Tune the company’s crown jewel. The most recent version, Auto-Tune 8, was released in 2014 and sells for $399, with simpler versions going for less. Cracked versions of the program are widely available online, further democratizing the field of music production that was opened up to bedroom producers and hobbyists with the rise of laptops and DAWs. Cher and Future bridged generations of Auto-Tune artists by performing an Auto-Tuned duet of Sly and the Family Stone’s “Everyday People” in a recent Gap commercial. Hildebrand is still writing algorithms and improvements like Flex-Tune, which further humanizes the system by allowing for intentionally off-pitch notes.

“This product has been incredibly successful. It’s ubiquitous. Everyone uses it,” says Berkley. “The question we’re asking now is, ‘What else is possible?’”

Now in his 60s, Hildebrand is still problem-solving. He recently found a way to use audio signal processing to increase the effectiveness of pacemakers and says this technology could save lives if not for FDA barriers. But as groundbreaking as his invention has been, it is Auto-Tune’s penetration of the most traditional realms that seems to most impress him.

“I was watching a PBS news broadcast from Islamabad,” he says, “and you could hear the call to prayer in the background was Auto-Tuned.”

Antares has always remained neutral regarding the swirl of controversy surrounding Auto-Tune, making the technology available without judging the ways it’s used. Much like fossil fuel extraction, the plug-in is a symbol of technology’s relentless march into the future. In music, at least, such innovation is ultimately part of a very old rhythm.

“Things that are new or different – new instrumentation, orchestration, different ways of performing, new ways to use dissonance – all create interest and excitement,” Hildebrand says. “Whenever that happens, you have the old crowd disapproving of the new sound. There’s a continuous cycle of acceptance, disapproval and criticism. These things are not new in the history of music.”

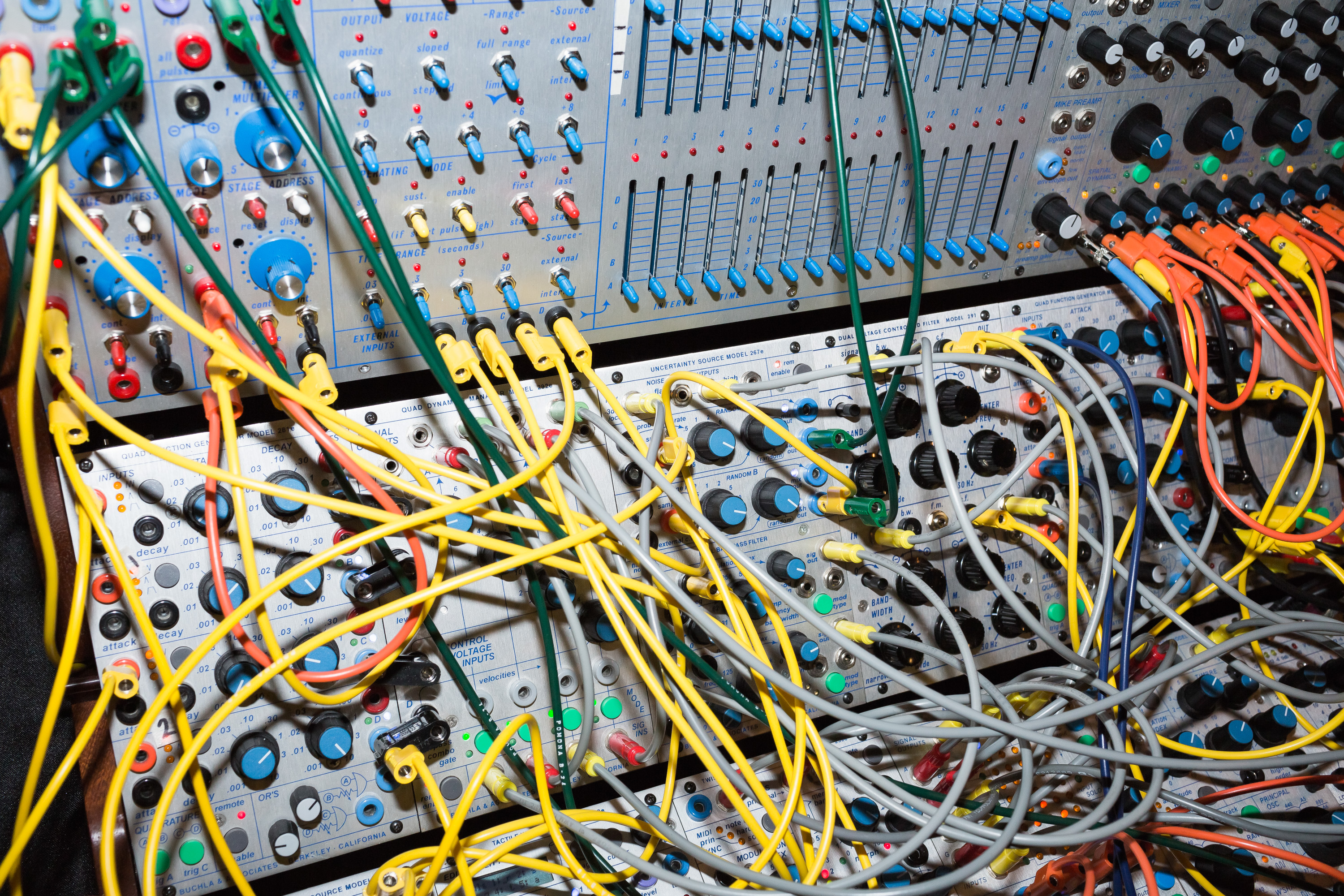

Header image © Johannes Ammler