Cryptography and Cyborg Speech: The Strange Journey of the Vocoder

Our series on important music-making devices continues with the vocoder, which played a major role in World War II en route to becoming a ubiquitous musical tool

What does a vocoder sound like? The instrument, which originated in telephone voice compression experiments of the 1920s, first and foremost brings to mind a specific time and space: electronic and funk music of the late ’70s. Few people realize that, in fact, we hear vocoders every day. The same technology that brought us Electric Light Orchestra’s “Mr. Blue Sky” and early hip-hop records like Jonzun Crew’s “Pac Jam” is at work in every cellphone on Earth. While the real-deal vocoder is often mistaken for similar technology like the talkbox or Auto-Tune, its hyper-competent descendants are all around us, their synthesis of the human voice now imperceptible. However, this technology has accomplished far more than enabling improved mobile communication. Between the early 20th century experiments that birthed the instrument and Kanye West, the vocoder played a major role as a cryptographic tool in the Second World War, while today similar technology is implanted in ears in the form of cochlear implants, finally transforming humans into the cyborgs we emulated using the very same device.

It all began with compression. When Alexander Graham Bell invented the telephone in 1876, the bandwidth his new device used was tiny. Only a small segment of the human voice got through the wires – early telephone users had to yell to be heard. Over the next few decades, the technology was refined until nearly whole sound waves were being sent through the telephone and reproduced on the other side, and in the 1920s, telephony began to take off in a big way. There was talk of creating a transatlantic undersea cable to bring phone calls from North America to Europe. This hypothetical cable would have only a few channels available to route all the calls between the two continents. In order to get the most out of this massive undertaking, scientists needed to find a way to fit as many phone calls as possible into the smallest possible bandwidth.

Luckily, a scientist named Homer Dudley had already spent years trying to distill speech into its component parts. Dudley worked at Bell Labs, the same storied company that had produced the telephone itself and would go on to revolutionize communication technology. After observing a competition of lip readers, Dudley realized that much of the information we convey through speech doesn’t need any sound to be understood. “He thought, ‘If I could just figure out what those parameters [of speech] are, and send those down a telephone line, we would economize so much on bandwidth,’” says Mara Mills, an associate professor at New York University’s Steinhardt School of Media, Culture and Communication, who studies disability technology. “If you go through [Dudley’s] lab notebooks in the AT&T archives, you see that he [becomes] interested in somehow reducing the range of the voice in the late 1920s,” Mills says.

In 1928, Dudley began to develop something he called the voice coder, or vocoder, a technology that would analyze speech, break it into its component parts and send the resulting code through a wire, instead of the sound signal itself. Then, once the code reached its destination, it would be reconstructed into a synthesized version of the original speech. The instrument he built broke the sound waves of human speech into ten bands of frequencies, and “sampled” each of those bands several times a second, creating a code that took up much less room than the sound wave itself. Inspired by artificial larynx technology, he created a synthesizer that could interpret the coded samples and construct something out of them that sounded like a voice. These two elements became what we know as a vocoder.
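The analyze-then-rebuild idea can be sketched in a few lines of Python. This is a rough illustration, not Dudley's circuit: the 8 kHz input rate, the evenly spaced band centres and the names `analyze` and `synthesize` are all assumptions made for the example, and each "band" is probed at a single frequency where a real channel vocoder would use a band-pass filter. The ten bands and the roughly 50 measurements per second follow the figures quoted in this article.

```python
import math

SAMPLE_RATE = 8000                      # assumed input sample rate in Hz
NUM_BANDS = 10                          # ten frequency bands, as described above
FRAME_RATE = 50                         # band measurements per second (illustrative)
FRAME_LEN = SAMPLE_RATE // FRAME_RATE   # 160 audio samples per measurement

# Hypothetical band centres: 300 Hz, 600 Hz, ... 3000 Hz.
BAND_CENTERS = [300 * (i + 1) for i in range(NUM_BANDS)]


def analyze(signal):
    """Turn audio samples into the 'code': one amplitude per band per frame."""
    frames = []
    for start in range(0, len(signal) - FRAME_LEN + 1, FRAME_LEN):
        frame = signal[start:start + FRAME_LEN]
        amps = []
        for fc in BAND_CENTERS:
            # Correlate the frame with a cosine and a sine at the band centre.
            step = 2 * math.pi * fc / SAMPLE_RATE
            re = sum(x * math.cos(step * n) for n, x in enumerate(frame))
            im = sum(x * math.sin(step * n) for n, x in enumerate(frame))
            amps.append(2 * math.hypot(re, im) / FRAME_LEN)
        frames.append(amps)
    return frames


def synthesize(frames):
    """Rebuild audio by driving one sine oscillator per band with the code."""
    out = []
    for k, amps in enumerate(frames):
        for n in range(FRAME_LEN):
            t = (k * FRAME_LEN + n) / SAMPLE_RATE
            out.append(sum(a * math.sin(2 * math.pi * fc * t)
                           for a, fc in zip(amps, BAND_CENTERS)))
    return out


# A tenth of a second of a 900 Hz test tone: 800 audio samples in,
# 5 frames of 10 numbers out, then 800 synthetic samples rebuilt from them.
tone = [0.5 * math.sin(2 * math.pi * 900 * n / SAMPLE_RATE) for n in range(800)]
code = analyze(tone)
rebuilt = synthesize(code)
```

In this toy case the code holds 50 numbers where the waveform held 800, which is the kind of saving Dudley was after. The rebuilt signal is synthetic: it comes from the oscillators, not from the original wave.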

At that time, the sound the vocoder produced was incredibly unappealing, nothing like what we think of as a human voice. In creating his device, Dudley had failed to account for pitch. “Their vocoder always had a terrible pitch problem, for decades,” Mills says. “It was basically shelved until World War II, because the sound of it was just too irritating. No telephone consumer would want it.” Another reason for the vocoder’s robotic accent was the sample rate. Dudley’s vocoder sampled speech fewer than 50 times per second, whereas a CD samples sound 44,100 times a second. The machine was beyond lo-fi.

During this experimental phase, Dudley did succeed in creating another device, the Voder. “The Voder was actually a manual operated talking machine,” says Dave Tompkins, author of the book How To Wreck A Nice Beach: The Vocoder from World War II to Hip-Hop. At the 1939 World’s Fair in Queens, New York, this bizarre talking keyboard made its debut. “They had a telephone operator [playing it]. She was using keys and foot pedals for consonants and vowels and had a sort of pitch modulator as well,” Tompkins says. The Voder was considered a novelty at the time. Neither of Dudley’s inventions seemed to hold much promise for practical application – something that was about to be disproved on an international stage.

World War II presented new problems for communication. Technology had advanced dramatically since World War I, and the Allies needed to make sure their correspondence was being transmitted securely.

“[The military] already had voice encryption, it was just no damn good,” says Craig Bauer, associate professor of mathematics at York College and author of the book Secret History: The Story of Cryptology. At the start of World War II, leaders including Franklin D. Roosevelt and Winston Churchill communicated using a tool called the A-3 scrambler, which Bauer says “was totally insecure. The Germans [had] broken it and were listening in.” The search was on for more advanced encryption technology, what experts referred to as “unbreakable speech.”

The National Defense Research Committee began testing the vocoder as a potential means of encryption and realized they’d hit on something that could work. In the early ’40s, they commissioned Bell Labs to build massive terminals where vocoders could communicate signals securely across long distances. The project would be codenamed SIGSALY, and the first successful transmission between General Eisenhower and Churchill that used the system went out in 1943.

The technology that allowed SIGSALY to transmit “unbreakable speech” was complex. Random noise, in the form of unique records, was layered on top of the vocoded transmission. “In essence [the records] added random values to the digitized speech,” Bauer says. “At the receiving end, these random values were subtracted out again and the original voice could be recovered.” These records, played on two turntables at each SIGSALY terminal, needed to be exactly in sync with a duplicate pair of records at the receiving terminal. To stay in sync, the machine used crystal-powered, super precise clocks. Each set of records could only be played once, in order to keep the code unbreakable. When one record ended, another needed to be cued up and mixed seamlessly. If someone listened in, all they would hear was white noise, like a TV tuned to a nonexistent channel.
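The add-then-subtract scheme Bauer describes can be sketched as a one-time key applied with modular arithmetic. This is only an illustration: the six quantization levels, the function names and the use of Python's `secrets` module as a stand-in for the noise records are assumptions for the example, not details given in this article.

```python
import secrets

LEVELS = 6  # assumed number of steps each digitized speech value can take


def make_key(length):
    """Stand-in for one noise record: a run of random values, used only once."""
    return [secrets.randbelow(LEVELS) for _ in range(length)]


def encrypt(samples, key):
    """Add the key to the digitized speech, wrapping around at LEVELS."""
    if len(key) < len(samples):
        raise ValueError("the key must be at least as long as the message")
    return [(s + k) % LEVELS for s, k in zip(samples, key)]


def decrypt(cipher, key):
    """Subtract the same key, in step, to recover the original values."""
    return [(c - k) % LEVELS for c, k in zip(cipher, key)]


speech = [0, 5, 3, 1, 4, 2]      # made-up digitized speech values
key = make_key(len(speech))      # both terminals need an identical copy
scrambled = encrypt(speech, key)
recovered = decrypt(scrambled, key)
```

The sketch also shows why the two terminals had to stay in sync: if the receiver subtracts key values that are offset by even one position, the output is as random as the key itself.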

Eventually, 12 SIGSALY terminals dotted the world, from Churchill’s bunker at 10 Downing Street and the Pentagon to Algiers, in North Africa, and a ship off the coast of Japan. The project went by many fantastic, comic book-esque names, including Project X and the Green Hornet, but the reality was that SIGSALY was incredibly cumbersome. The terminals were the size of a typical suburban home and weighed more than 50 tons. They were expensive, too, costing up to a million dollars each, or more than $13 million today. They also ran extremely hot, necessitating huge air conditioners to keep the equipment from melting, and the transmission quality hadn’t yet surpassed Dudley’s original design. Because the system sampled speech only 50 times per second, the esteemed leaders who used the device came out sounding like Daffy Duck.

“The pitch was fluttering all over the place,” says Tompkins. “It would go into very high registers. The generals and heads of state – these iron-jawed men – really had a problem with that. They thought it sounded too ‘girly.’” Some adjustments were made to save the men’s pride, but Bauer says these thoughts were secondary to the project’s main intent. “They weren’t interested in catching subtle nuances, the tone of voice,” he says. “They just wanted it to be understandable on the receiving end. If they could’ve done it with an even lower resolution, they would have.”

After the war, SIGSALY became too much effort to maintain and all of the original terminals were scrapped. But while this part of the vocoder’s history was over, its other life, in music and entertainment, was just beginning. In the post-war decades, many tools that had served military purposes during wartime found other uses. Vocoder cousins were becoming common. In the late ’40s and ’50s this sound started to make its way into entertainment. “The talking train in Dumbo was a famous example,” says Tompkins. “It was also used to drive Joan Crawford insane in the film called Possessed.”

In the ’50s, electronics companies like Sennheiser and Siemens created electronic vocoders that were more functional than those invented by Dudley and used in the SIGSALY project, though they still produced a robotic, accented speech. Demo tapes of the experimental machines circulated until they made their way into the hands of musicians like Florian Schneider, who would go on to found Kraftwerk.

At the same time, Werner Meyer-Eppler, a scientist at the University of Bonn in Germany, was investigating the possibilities of electronic music. “Meyer-Eppler had a meeting with Homer Dudley just after the war and there was an exchange of information from the vocoder realm,” Tompkins says. “He was probably one of the first to realize the vocoder really had a future in electronic music.” In a famous speech in the city of Detmold in 1949, Meyer-Eppler began to spread his vision. “He played these electrical larynx recordings and played vocoder recordings,” Tompkins says. “He played recordings of Homer Dudley on the vocoder, singing ‘How Dry I Am’ and ‘Suzy Seashells.’” The experimental electronic pioneer Karlheinz Stockhausen was one of Meyer-Eppler’s students who was influenced by his work at this time. After Meyer-Eppler and others introduced the vocoder as a potential tool for musicians, it began to pop up in works by forward-thinking composers.

By the 1960s, the vocoder was starting to show promise. Wendy Carlos’s use of the vocoder in the soundtrack for A Clockwork Orange marked a breakthrough for the vocoder as an instrument. “Wendy Carlos doing her vocoder translation of Beethoven used in A Clockwork Orange was kind of a major moment,” Tompkins says. She also used vocoded voices in outtakes for The Shining’s score. In Kraftwerk’s early days, they played Carlos’s soundtrack as an intro at concerts.

The connection between the vocoder and space emerged around this time. A German TV show, Space Patrol, had a vocoded rocket launch countdown in its credits. The British synthesizer company EMS gave one of their huge, expensive vocoders to the BBC’s Radiophonic Workshop, where sound effects were developed to play on the radio. A radio engineer named Malcolm Clarke ended up using the vocoder to record a Ray Bradbury radio play called There Will Be Soft Rains, an excerpt from The Martian Chronicles. The play is about a smart house that survives the death of its inhabitants in a nuclear holocaust. “[The house] has these automated voices reminding you about the weather or when bills are due,” Tompkins says. “The [house is] still serving breakfast even though its occupants have been burned into the side of the building by a nuclear blast.” The vocoder enabled the house’s eerily calm robotic voice.

In 1974, the first real era of vocoder use in popular music began when the German pioneers Kraftwerk released their album Autobahn. “They were just starting to be successful at that time, but they didn’t have a huge amount of money, so they went out and commissioned a telephone engineer to build a vocoder for them,” says Mark Jenkins, a British electronic musician and author of the book Analog Synthesizers. “This was quite customized. It was in two large metal boxes and involved a lot of circuit boards. [The sound] really set the standard for the next 20 years to come.”

Kraftwerk’s unique sound and fascination with technology caught people’s attention. “[The vocoder makes] a fascinating and unusual sound,” says Jenkins. “People are used to hearing a musical instrument like a piano or a tuba, and they’re used to hearing human voices. But those two are quite different things, and to hear them combined in a very odd way always caught the attention.” This shock factor meant that many popular songs that used vocoder became hits: Electric Light Orchestra’s song “Mr. Blue Sky,” released in 1977, is just one example. Disco legend Giorgio Moroder also made heavy use of the vocoder on his albums Einzelgänger in 1975 and From Here To Eternity in 1977, setting the stage for the vocoder’s crossover to hip-hop and house music in the ’80s. Those DJs would play Moroder’s records as they invented entirely new ways to use turntables.

Different musicians created a variety of effects with this new instrument. “Some of the German musicians, like Klaus Schulze, started to use the vocoder as sort of background pad. You vaguely got the idea there was a human voice involved, but you couldn’t hear specific words,” Jenkins says. “Whereas other bands, like Yellow Magic Orchestra in Japan, Toto and other heavy rock and progressive rock bands in the States, were trying to make the words intelligible.”

Despite the vocoder’s popularity, in those days it was still far from accessible. Artists like Moroder and Herbie Hancock, whose records would influence early hip-hop and house artists, were using technology far out of those young artists’ price range. The Sennheiser vocoder cost $10,000 (about $45,000 today) or more. Because of these barriers, the vocoder’s sound first crept into hip-hop through sampled records. In the ’70s, artists like Sly Stone, Peter Frampton and Roger Troutman of Zapp used the talkbox, an instrument consisting of a plastic tube placed in the performer’s mouth, to great effect. Like the vocoder, the talkbox allowed performers to alter their voices, shaping any sound played through the talkbox by simply mouthing words. To listeners, it’s difficult to tell the difference between the vocoder and the talkbox, so the instruments’ influence is intermingled.

By the end of the decade hip-hop pioneers like Afrika Bambaataa were playing these talkbox and vocoder records, along with those by artists like Moroder and Kraftwerk, at their early DJ gigs. Those records ended up influencing a movement of Afrofuturist hip-hop, drawing on the legacy of artists like Stone and Parliament-Funkadelic.

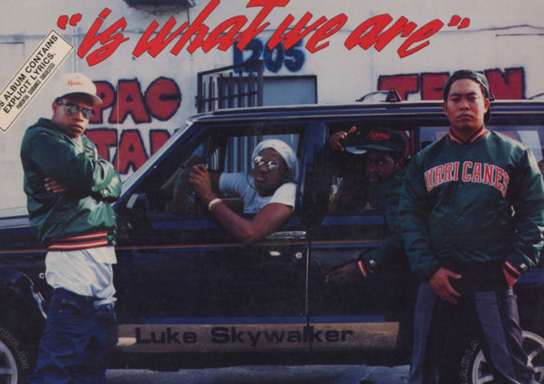

“I believe it cost a fortune when we first got it. I remember I spent every dime I had to buy a vocoder and synthesizer,” says Michael Jonzun, who founded the early hip-hop outfit Jonzun Crew and became one of the first artists to introduce vocoder to the mainstream. Jonzun, who grew up in Florida near Cape Canaveral before moving to the Roxbury neighborhood of Boston, was always fascinated by space. His first introduction to altered voices came from a neighbor who spoke through an artificial larynx. “It really scared me,” he remembers. “But once I found out what it was, it was pretty cool, because he sounded like a spaceman.” Later on, Jonzun’s introduction to Afrofuturism came when he served as a sound tech for the far-out performer Sun Ra when he played an extended engagement in Boston. After Jonzun’s vocoder-laced singles like “Pac Jam” became popular, people began to call him Spaceman. “[The vocoder] brought all of these futuristic things out in me,” he says. “It was just magic. It was the thing that was missing in my life for creativity. I feel like it really was an extension of who I am.”

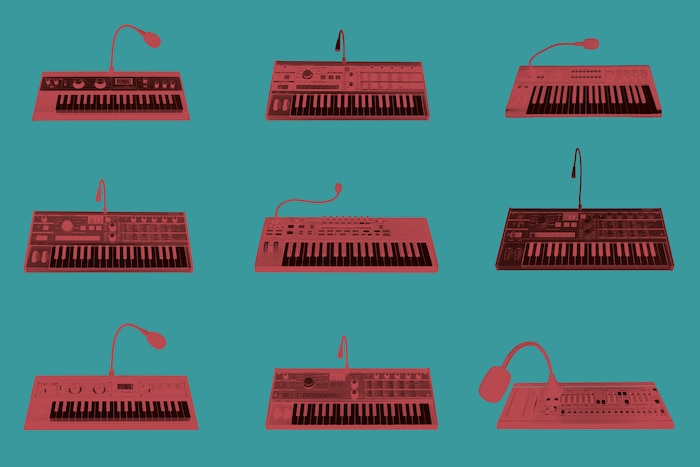

By the late ’70s, the price of vocoders had dropped dramatically due to newly developed cheap synth keyboards. The Korg VC-10 vocoder keyboard, produced in 1978, only cost about $500. “It was quite accessible to all sorts of musicians by that point,” Jenkins says.

The fascination with technology and space motifs in the funk and hip-hop communities continued to grow throughout the early ’80s. Bambaataa, Jonzun, the graffiti artist and musician Rammellzee, Newcleus, Warp 9 and X-Clan dressed in futuristic, astronaut-like outfits, sometimes mixed with traditional African styles, and incorporated cosmological themes into their music. Warping their voices with the vocoder was another way to embody this fantastic persona. “The vocoder alienated the voice from the body,” says Tompkins. “[Hip-hop] was music being made by marginalized people, alienated people. Their voices are suppressed. [The vocoder was] a way to inhabit another voice and claim it as your own.”

The instrument’s sound was so distinctive that it fell in and out of fashion. In the late ’80s, Run-DMC started the trend that would eventually become gangsta rap, rejecting the outlandish sounds and styles of funk-influenced hip-hop in favor of a gritty aesthetic. But then, in the mid-’90s – by which time gangsta rap had grown into a massive industry – the talkbox made a comeback on Los Angeles rappers Dr. Dre and Tupac’s mega-hit “California Love.” Zapp’s Roger Troutman collaborated on the song, and even appeared in the wild, Mad Max-inspired video, bringing his music back into the popular consciousness.

By the mid-’90s, vocoder technology had finally progressed far enough since its birth 70 years earlier that it could be used imperceptibly. “If you sample at a high enough rate, you can essentially reconstruct the signal perfectly at the receiver,” says Mara Mills. Every call you’ve ever made on a cellphone has used vocoder-like, analysis-synthesis technology. That means the voices you’re hearing are not the original sound waves, but a reconstruction of coded voices produced by a tiny synthesizer. And cellphones aren’t the only communications technology that uses a descendant of the vocoder to transmit sound – far from it. “Most digital media is transmitted through analysis-synthesis today,” Mills says. “There’s lots and lots and lots of different kinds of vocoders today – there’s channel vocoders, voice-excited vocoders, there’s linear predictive vocoders. So it’s a suite of technologies today, not just one thing.”

The technology has also enabled the advancement of cochlear implants, which help people with hearing loss. “The issue with cochlear implants is that there’s a limited number of channels of information that are sent into the cochlea. The very early cochlear implants were single channel,” says Mills. “It’s a much, much reduced amount of information compared to the cochlea [inner ear] itself.” Though implants have improved dramatically since they were introduced, they still face some of the same problems present in early vocoders. “[People] describe their initial hearing through the cochlear implant as very metallic and or robotic,” Mills says. “It’s a learning process to hear through a cochlear implant, especially if you’ve heard in a different way before.”

Meanwhile, vocoders used as instruments have become ubiquitous. You no longer need to drop thousands or even hundreds of dollars to get a good vocoder effect. Many keyboards come with a vocoder setting, and software like Logic or Cubase comes with vocoder-like effects as well.

When Homer Dudley began trying to synthesize the human voice, his inventions were the height of technological prowess. Even though his vocoder made a noise that was decidedly inhuman, it was the sound of the future.

For decades, the robotic voice of the vocoder indicated something beyond the human grasp, as the adaptation of Ray Bradbury’s dystopian stories or Wendy Carlos’s A Clockwork Orange soundtrack demonstrated. Today, we instead associate the future with the pristine, overproduced sounds of collectives like PC Music. The menace of artificial intelligence is associated with perfection, not garbled, accented, robotic speech. Technological precision now means that the technology is invisible – as in our cellphones, where we believe we’re hearing the other person’s real voice, not a vocoded synthesis. The vocoder is now, like vinyl records, a throwback to the nostalgic, human warmth of the past. When we hear an altered voice, in a mangled voice message left on a cell phone and sampled on a hip-hop song, or a vocoded hook on an R&B track, we recognize the humanity of that imperfection. The vocoder has come full circle: from the aspirational goal of perfectly recreating the human voice, to intentionally obscuring the voice, we now see it as a way of reaffirming the human.

Header image © RBMA