A Visual History of Spatial Sound

How technological innovations made sound physical

Humans have used sound to understand their physical surroundings since the Paleolithic era. Using the fundamentals of echolocation, our ancestors would shout into the darkness of a cave and judge its size and shape by the resonance of their vocals. “There’s literally maps of cave drawings to be found with very precise sonic information about what people experienced while interacting with the space with their voices,” says Paul Oomen, founder of 4DSOUND. The average spelunker these days probably wouldn’t know how to use vocalizations to assess space, he adds, “which points to the sensitivity and ingenuity of ancient men. Maybe we have even lost that ability to listen in that way, due to the totally different environment we live in.”

Over time, humans learned how to use their physical surroundings to shape sound, which led to some pretty monumental technological advances. In the mid-1500s, religious music directors spatialized sound with choral and instrumental group arrangements; today, sound is physicalized by Oomen’s 4DSOUND systems, like the one installed at Berlin venue MONOM. As part of the Red Bull Music Academy Festival in Berlin, Oneohtrix Point Never will debut a new live performance on a custom rig made up of 102 omnidirectional speakers called the Symphonic Sound System. Oomen explains that visitors will be able to hear sound on a finer, more detailed level than “very rough macro-sonic information everyone can recognize,” like rhythm, tone and frequency. “It’s kind of a mirror,” he says. “It’s mirroring the way we perceive all the time, but makes those levels of perception that we’re usually not aware of tangible.”

Below, we take a look back at some of the most formative developments in the history of spatial sound.

Mid-1500s: Renaissance Antiphony

No one knows exactly where cori spezzati – the practice of splitting a choir into smaller groups that sing in call-and-response – originated, but most agree that Flemish composer Adrian Willaert helped canonize it. Originally a law student who served in the court of Louis XII, he was appointed the musical director of Venice’s gilded Basilica di San Marco, where he took advantage of one of its most distinguishing features: two organs facing each other across the pews. So he ditched the period’s dominant vocal style, polyphony (multiple independent melodic lines sung at the same time), divided the church choir into two and directed each half to sing in alternation, creating a lattice of voices over the worshippers. Willaert was also one of the first to recognize that each half of the split choir needed to be harmonically complete in and of itself, so that churchgoers would get the same effect no matter where they sat.

1950s: Modernist and musique concrète installations

In 1950, Pierre Schaeffer and his pupil Pierre Henry released Symphonie pour un homme seul, a mishmash of string snippets, vocal blurts and arrhythmic clangs that was as groundbreaking technologically as it was aesthetically. Schaeffer’s Paris studio, Radiodiffusion-Télévision Française, became a hub for engineers, composers and scientists to brainstorm possibilities for the newly commercially available tape recorder and multi-channel playback system. Despite these new technologies, advances in spatial sound design at the time still came down to the placement of the speakers in a room. Schaeffer arranged four channels through five speakers to the right, left, behind and above audience members. Though the technical abilities may have been limited compared to what we have now, Oomen says, “they’re very elaborate from the point of view of the way space is organized within the musical thinking.”

1960: Stockhausen’s Kontakte

In a 1972 lecture, Karlheinz Stockhausen distilled the essence of spatial sound to a rapt audience at Oxford University. “Changing clockwise or counter-clockwise, or being at the left side in the rear or in the front, or alternating in a dialogue between rear left and front right, these are all configurations in space that are as meaningful as intervals in melody and harmony,” he said. The electroacoustic icon deployed almost this exact technique in Kontakte, the first composition ever written for a quadraphonic sound system. Written and recorded at the WDR Studio für elektronische Musik in Cologne, Germany, which he would later direct, Stockhausen’s work distributes electronic tape, piano and percussion sections throughout four channels and speakers. As you listen to the piece, its plinks, bleeps, grumblings and mutterings surge in and out of your field of binaural hearing so vividly that it’s almost as though they’re just over your shoulder.

1958: Iannis Xenakis’ Sound Installations

When Le Corbusier was asked to build the Philips-sponsored pavilion at the Brussels World’s Fair in 1958, the modern architecture pioneer reportedly replied, “I will not make a pavilion for you but an Electronic Poem and vessel containing the poem; light, color image, rhythm and sound joined together in an organic synthesis.” He also would not make it, period: Le Corbusier gave his protégé, Greek-French composer, designer and engineer Iannis Xenakis, total creative control. Xenakis determined the pavilion’s uniquely pitched exterior with mathematics, and shaped the interior like a cow’s stomach, so visitors would be “digested” as they passed through. As if that wasn’t immersive enough, Xenakis fitted the space with 350 speakers and 20 amplifiers, through which he played Edgard Varèse’s Poème électronique. It was a feat outdone only by himself with an installation at the Osaka Expo in 1970, featuring 800 speakers arranged around and above the audience, and underneath the seats.

1974: François Bayle’s Acousmonium at Maison de la Radio

In the late 1950s, François Bayle was a country bumpkin from Bordeaux, woefully unprepared to join Paris’ elite musique concrète circle. After failing the composers’ entrance exam and being snubbed by Pierre Schaeffer, he showed them all by eventually managing the Radiodiffusion-Télévision Française. It’s unclear whether Bayle or Schaeffer coined the term “acousmatic,” meaning music that obscures the sound source, leaving the listener to draw their own conclusions about the origin of what they’re hearing. Bayle’s Acousmonium accomplishes this with an “orchestra of speakers” that’s still in use today. A variety of specialized speakers emphasizes different tones and timbres more effectively, giving a more textured and immersive listening experience, but the pieces played over the Acousmonium were not themselves spatialized by such a setup. “Most speakers, which have more of a directionality to them, tend to emphasize themselves, so they impose their own special character on any sound they produce,” explains Oomen. “No matter what fancy kind of processing we use to try and make it sound spatial, there’s always this speaker in between.”

1970s: Ambisonics

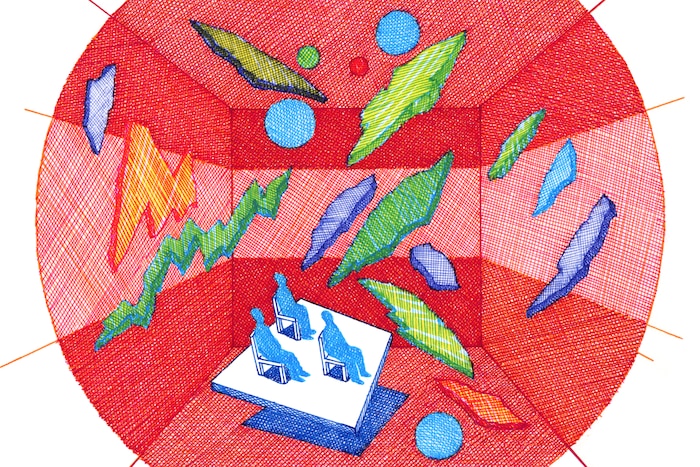

Ambisonics, a technique for the recording, mixing and playback of 360-degree audio, was conceived in the 1970s by a British research institution. It languished in obscurity until the boom in home entertainment systems in the 1990s, and has since found new frontiers in virtual reality video games, panoramic YouTube and Facebook videos and 3D multimedia installations. (In 2012, the BBC considered exploring its broadcast possibilities for the distinctly 2D medium of radio.) What differentiates Ambisonics from previously existing spatialized sound technologies is that it can be adapted for any number of speakers – it’s divorced from the so-called “one-channel, one-speaker” philosophy. And yet, in some ways, it’s the beta version of truly spatial sound. The software is free – Oomen coded with it in university, he says – and not optimally operational; for example, the sweet spot where gamers or headset-wearers feel the full effects of the “sphere of sound” is quite small.
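
For the curious, the idea of breaking away from “one-channel, one-speaker” can be sketched in a few lines of Python. This is only a rough illustration, assuming the simplest horizontal, first-order case and a basic projection decoder for evenly spaced speakers on a circle; the function names `encode_first_order` and `decode_to_ring` are made up for this example and do not come from any particular Ambisonics toolkit. The same three encoded components are decoded first to four speakers, then to eight.

```python
import math

def encode_first_order(sample, azimuth_rad):
    """Encode a mono sample into horizontal first-order components (W, X, Y).

    Assumption: W carries the sample unscaled; other conventions scale W differently.
    """
    w = sample                          # omnidirectional component
    x = sample * math.cos(azimuth_rad)  # front-back component
    y = sample * math.sin(azimuth_rad)  # left-right component
    return w, x, y

def decode_to_ring(w, x, y, num_speakers):
    """Decode (W, X, Y) to feeds for evenly spaced speakers on a circle.

    Speaker i sits at angle 2*pi*i/num_speakers; this is a basic projection
    decoder, not an optimized one.
    """
    feeds = []
    for i in range(num_speakers):
        phi = 2 * math.pi * i / num_speakers
        feed = (w + 2 * (x * math.cos(phi) + y * math.sin(phi))) / num_speakers
        feeds.append(feed)
    return feeds

# One source at 90 degrees, encoded once, then played over two different rigs.
w, x, y = encode_first_order(1.0, math.radians(90))
quad = decode_to_ring(w, x, y, 4)
octo = decode_to_ring(w, x, y, 8)
```

In both layouts the loudest feed goes to the speaker sitting at 90 degrees, even though the encoded signal never mentions a speaker count. Real decoders are considerably more refined than this sketch.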

1990s: Recombinant Media Labs

Naut Humon moved to San Francisco at the height of the ’60s, and the freewheeling, open spirit of that time and place seems to have stuck with him. The co-founder of Asphodel Records (which has put out releases by electronic artists including John Cage and Ryuichi Sakamoto) conceptualized his Sound Traffic Control initiative based on experiential concerts he threw in the 1970s and 1980s. Realized as a 1991 Tokyo exhibition with 800 speakers surrounding an abandoned air traffic control tower, STC eventually took root in SF as Recombinant Media Labs. Somewhere between an IMAX theater and an avant-garde music venue, it’s a permanent performance space with eight speakers overhead, eight at head height, 10 subwoofers linked to transducers under the floor and 10 sprawling screens for A/V installations. “It’s as close as you can come to being inside another person’s brain,” gushed at least one visitor.

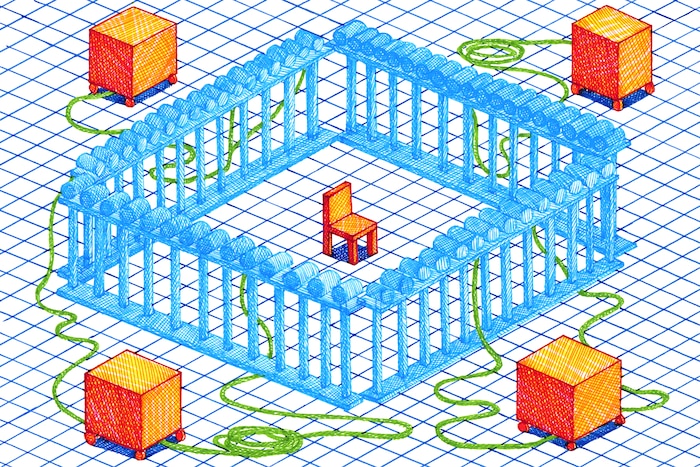

2006: Wave field synthesis

Perhaps best-known for his theory that light acts as a wave and not a particle, Dutch physicist, astronomer and mathematician Christiaan Huygens also put forth the Huygens-Fresnel principle, which states that every point on a wave front propagates additional wavelets. Invented in 1988 and demonstrated publicly in the mid-2000s, wave field synthesis relies not on the psychoacoustic phenomenon of “phantom sounds” – noises that don’t appear to belong to any one speaker – but on 192 speakers facing inward, using Huygens’ principle to approximate a real-world acoustic environment for the listeners inside. “I would say it’s really a scientific model, and as a scientific model it has a lot of value,” says Oomen, who was greatly inspired by wave field synthesis when conceptualizing 4DSOUND. “It taught me a lot about the mechanics of spatial sound.”
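
Huygens’ idea translates into surprisingly simple arithmetic. The Python sketch below is a heavily simplified illustration, not the driving function a real wave field synthesis system would use: it assumes a straight row of speakers (rather than the inward-facing ring described above), a virtual point source sitting behind that row, and plain 1/distance attenuation. The function name `wfs_delays_and_gains` and the speaker spacing are hypothetical. Each speaker acts as one “wavelet,” firing later and more quietly the farther it is from the virtual source.

```python
import math

SPEED_OF_SOUND = 343.0  # meters per second, roughly, in air at room temperature

def wfs_delays_and_gains(speaker_xs, source_x, source_depth):
    """Return a (delay_seconds, gain) pair for each speaker in a straight row.

    Assumptions: speakers lie on a line at positions speaker_xs (meters), and
    the virtual point source sits source_depth meters behind that line at
    horizontal position source_x. Gain uses simple 1/distance spreading.
    """
    result = []
    for sx in speaker_xs:
        distance = math.hypot(sx - source_x, source_depth)
        delay = distance / SPEED_OF_SOUND  # time for the wavefront to reach this speaker
        gain = 1.0 / distance              # farther speakers play more quietly
        result.append((delay, gain))
    return result

# 17 speakers spaced 10 cm apart, virtual source 2 m behind the center speaker.
speaker_xs = [(i - 8) * 0.1 for i in range(17)]
settings = wfs_delays_and_gains(speaker_xs, source_x=0.0, source_depth=2.0)
delays = [delay for delay, _ in settings]
gains = [gain for _, gain in settings]
```

The center speaker fires first and loudest, and the delays grow symmetrically toward both ends of the row, so the combined output curves like a wavefront spreading from a point behind the speakers.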

2012: Dolby Atmos

When R.E.M. remixed their landmark album Automatic for the People on Dolby Atmos for its 25th anniversary reissue last year, guitarist Peter Buck became a convert. “I’m usually a little ambivalent about things like that, but I was kind of blown away at what it sounded like,” he told Billboard. “It didn’t go as far as Dark Side of the Moon [remixed in Dolby 5.1 for its 30th anniversary in 2003], but it’s not that kind of record.” (Now, you can hear The Beatles’ Sgt. Pepper’s Lonely Hearts Club Band and INXS’s Kick in Atmos, too.) Typically reserved for movie theaters – with its 64 discrete speaker feeds and double that number of audio inputs – Dolby Atmos is a step up from its predecessor, as sound designers can now direct audio not only to speakers, but to specific locations around the room.

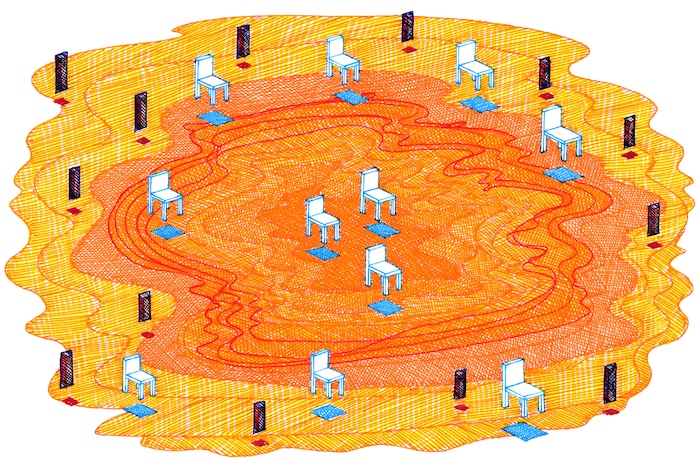

2012: 4DSOUND

Oomen became fascinated with spatial sound while studying classical music at the Conservatory of Amsterdam. “Rather than listening to a melody or different instruments, what I was really interested in is the kind of subtle energy that happens in between,” he recalls. An avid theatergoer, he also wanted to turn sound into “something physical, something real, that doesn’t come from speakers, that you can bring to stage and bring off the stage again.” With 16 columns housing omnidirectional speakers on the ceiling, above the head and underneath an acoustically transparent floor, the 4DSOUND system at MONOM physicalizes sound as if it were an object in space. This phenomenon is so finely tuned that attendees will have a different aural experience depending on where they’re standing in the space. “It can be a lifelong journey to learn how to work with space,” says Oomen. “I’m also still learning.”

Header image © Kevin Lucbert